Valid Eval

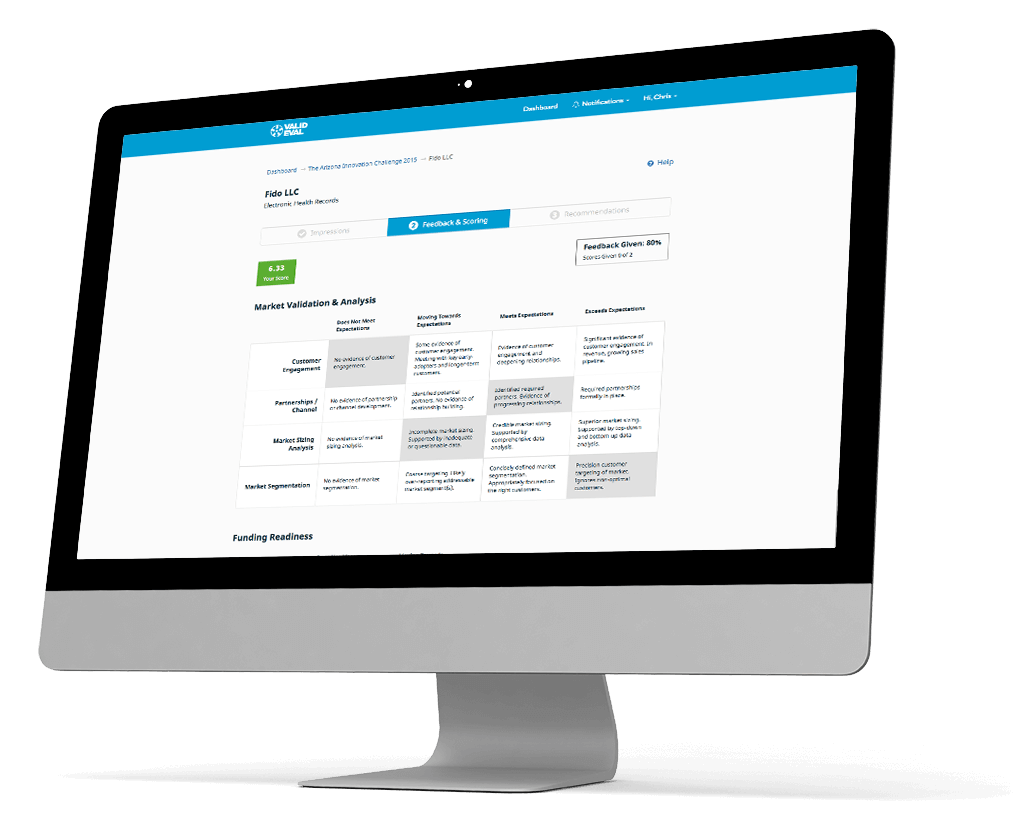

Valid Eval is designed for organizations that must make and defend tough decisions. The system is easy to use, minimizes bias, and provides highly useful feedback to participants. It surfaces and organizes the normally hidden criteria used in complex evaluations, increasing evaluator accountability and support for outcomes.

Valid Eval was introduced to Chromedia after a bad experience with a previous development team, which had delivered an expensive mess of code on an unstable structure. The product needed some key new features to continue its growth.

Project Overview

Our first task was to analyze the existing code. It quickly became apparent to us and to Valid Eval’s independent technical advisors that the code could not be refactored. The entire codebase needed to be rewritten with an entirely new structure, but Valid Eval had a deadline and a budget, so a complete rebuild was not an option. Valid Eval also didn’t want to waste time and money building on code that would soon be scrapped. Our developers proposed building the new features as new code that the legacy code would call via APIs. This allowed the new code to interact with the legacy system and to be reused in the future rewrite.
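The approach described above can be illustrated with a minimal sketch. It is not Chromedia's or Valid Eval's actual code: the route `/api/v1/score-summary`, the functions `score_summary`, `handle_request`, `call_new_feature`, and `legacy_report`, and the JSON payload shape are all hypothetical names chosen for illustration. The idea shown is that the legacy code depends only on a narrow JSON contract, so the new feature can later be carried into a rewrite unchanged.

```python
import json
from typing import Callable, Dict


# --- New code (hypothetical): lives outside the legacy codebase ---
def score_summary(payload: dict) -> dict:
    """Hypothetical new feature: summarize a list of evaluation scores."""
    scores = payload["scores"]
    return {"count": len(scores), "average": sum(scores) / len(scores)}


# Hypothetical route table mapping API paths to new-feature handlers.
ROUTES: Dict[str, Callable[[dict], dict]] = {
    "/api/v1/score-summary": score_summary,
}


def handle_request(path: str, body: str) -> str:
    """API boundary: JSON text in, JSON text out.

    In a real deployment this would sit behind an HTTP server; it is
    kept in-process here so the sketch runs without a network.
    """
    handler = ROUTES[path]
    return json.dumps(handler(json.loads(body)))


# --- Legacy side: the only change is one thin call into the API ---
def call_new_feature(path: str, payload: dict,
                     transport: Callable[[str, str], str] = handle_request) -> dict:
    """Serialize the payload, send it across the boundary, decode the reply."""
    return json.loads(transport(path, json.dumps(payload)))


def legacy_report(scores: list) -> str:
    """Stand-in for existing legacy code that now delegates to the new feature."""
    summary = call_new_feature("/api/v1/score-summary", {"scores": scores})
    return f"{summary['count']} scores, average {summary['average']:.1f}"


if __name__ == "__main__":
    print(legacy_report([3, 4, 5]))
```

Because the legacy side only knows the path and the JSON shape, the `transport` argument could be swapped for a real HTTP client without changing `legacy_report`, and a rewritten system could call the same handlers directly.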

Key Services Provided

System Architecture

UI/UX Design

Web Development

Quality Assurance

Our Approach

Touched the legacy code as little as possible.

Took an active role in defining the use cases and assisted in developing the user interface.

Highlights

- A happy client, despite the challenges and speed bumps.

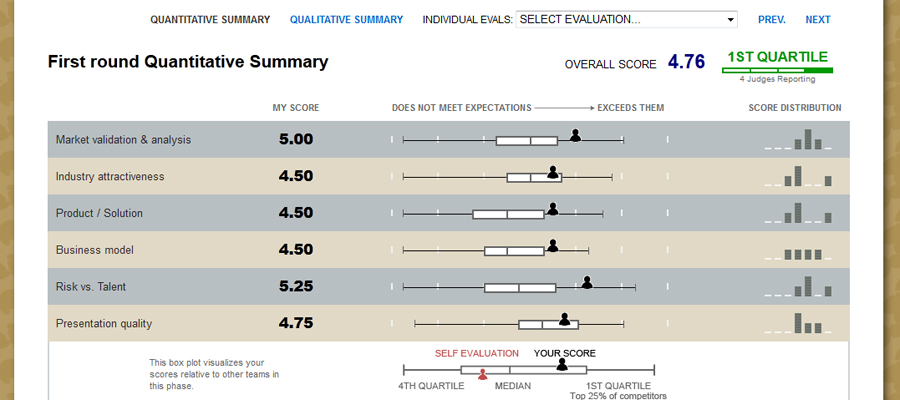

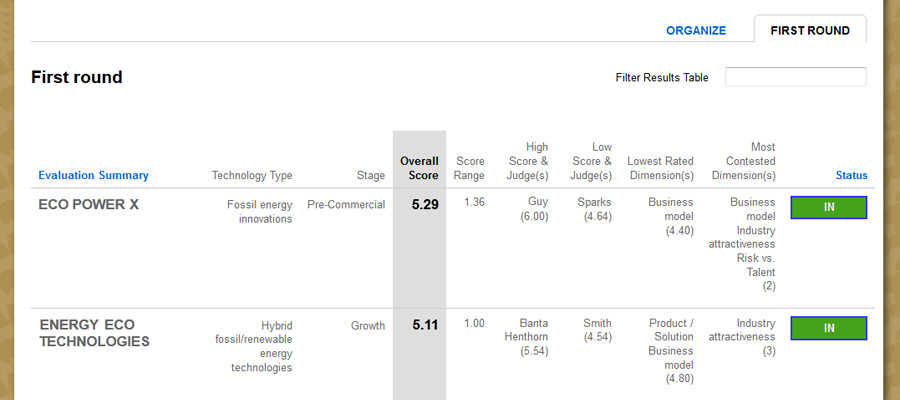

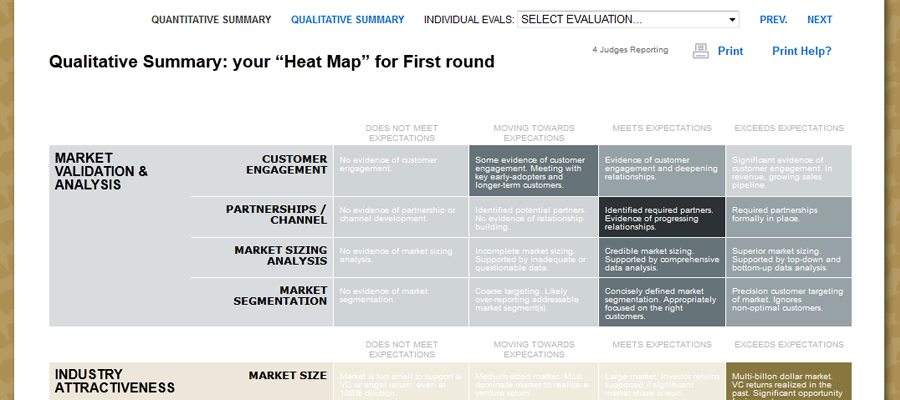

- New systems that make the experience of Valid Eval’s customers significantly better.

Challenges

- The project had numerous unforeseen challenges for both the Chromedia team and Valid Eval. Unfortunately, it took twice as long and cost twice as much as first expected. Fortunately, Valid Eval was with us every step of the way and understood the minefield-like complexity of their legacy code.

- Adding significant new features to an existing system with active users is a challenge for anyone, because it is hard to step in and understand all the complexities involved. Both Chromedia and Valid Eval missed many of the use cases and user stories associated with the planned features. The launch of the new features turned out to depend on further “must have” features, which caused the scope to creep.